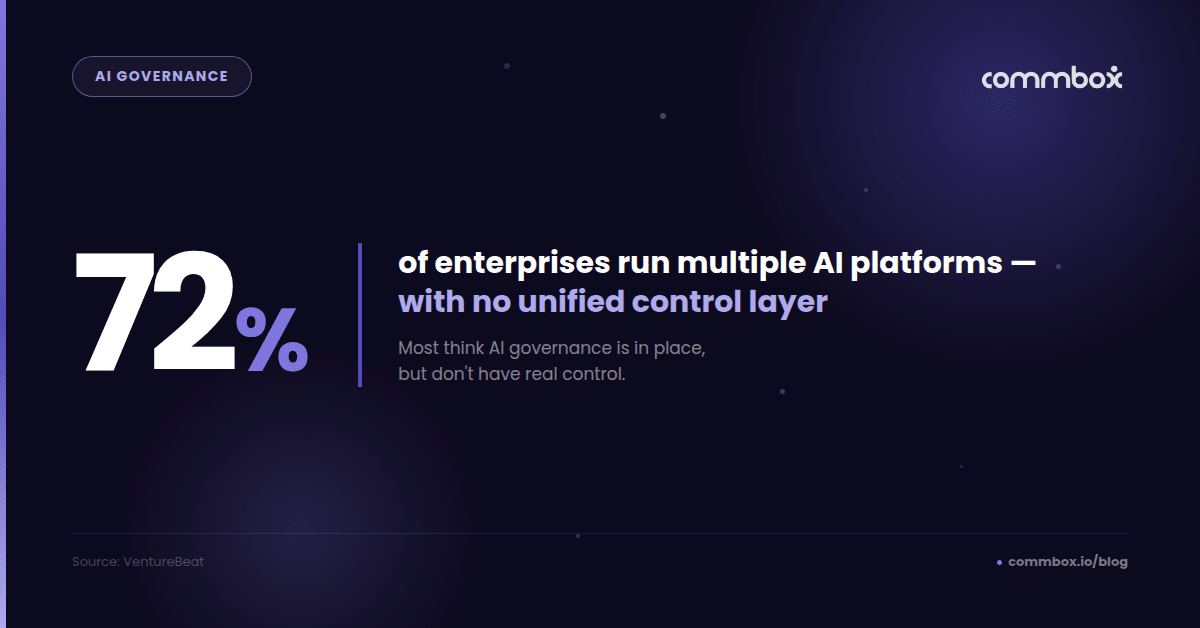

A recent VentureBeat report found that 72% of enterprises believe they have AI governance in place. But most enterprises aren’t running a single AI system. They’re managing multiple platforms, each behaving differently, with no unified way to control them.

AI governance is meant to ensure systems are secure, compliant, and operating within defined boundaries. But in practice, governance often breaks down at scale—especially in customer-facing AI, where decisions happen in real time.

This gap between governance and control is what’s increasingly being described as the “governance mirage”: the belief that AI is managed and secure, when in reality it isn’t.

Why AI Governance Breaks at Scale

The issue isn’t that organizations aren’t thinking about governance. It’s that governance hasn’t kept pace with how AI is actually being deployed.

AI doesn’t live in one place. It spreads across teams, tools, and use cases. Different groups build their own workflows. New agents are added quickly. Systems evolve independently.

Over time, what looks like a governed environment becomes something else entirely—a patchwork of AI systems making decisions in parallel, without a unified way to oversee or control them.

The reason is structural: enterprises run multiple AI platforms, each behaving differently, and nothing sits above them to provide unified control.

Why Customer-Facing AI Exposes Governance Gaps

Inside the organization, these gaps can go unnoticed for a while. An internal tool behaving inconsistently is rarely critical.

But when AI becomes part of customer engagement, the tolerance for inconsistency disappears.

Now, every response matters. Every decision is visible. And every interaction carries risk—whether that’s compliance exposure, customer frustration, or damage to trust.

In this environment, AI doesn’t just need to sound right. It needs to act correctly, every time.

The Gap Between AI Governance Policies and Real Behavior

A lot of governance today is policy-based. It defines what AI should do.

But in live environments, what matters is what AI actually does.

Without a way to observe and influence behavior in real time, that gap grows quickly.

This is where the signs start to show:

- Limited visibility into AI behavior across systems

- Inconsistent guardrails between tools and teams

- Issues discovered after the fact—often by customers or auditors

This isn’t a capability problem. It’s a coordination problem.
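One way to make the coordination problem concrete is to inventory which guardrails each system enforces and look for gaps. The sketch below is illustrative only: the system names, guardrail names, and the `find_guardrail_gaps` helper are all hypothetical, and a real inventory would come from each platform’s own configuration.

```python
# Hypothetical inventory: which guardrails each AI system currently enforces.
guardrails_by_system = {
    "support_chatbot": {"pii_redaction", "refund_policy", "tone_check"},
    "voice_agent": {"pii_redaction", "tone_check"},
    "email_assistant": {"pii_redaction", "refund_policy"},
}


def find_guardrail_gaps(inventory: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per system, the guardrails enforced elsewhere but missing here."""
    # Every guardrail that at least one system enforces.
    all_guardrails = set().union(*inventory.values())
    return {
        system: all_guardrails - enforced
        for system, enforced in inventory.items()
        if all_guardrails - enforced  # keep only systems with a gap
    }


gaps = find_guardrail_gaps(guardrails_by_system)
for system, missing in sorted(gaps.items()):
    print(f"{system}: missing {sorted(missing)}")
```

Even a simple comparison like this surfaces inconsistencies before a customer or auditor does, though it only shows what is configured, not how each system actually behaves.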

Why More AI Tools Don’t Solve Governance Problems

The natural response has been to add more—more models, more agents, more tools.

But without a way to unify and control them, that only increases complexity.

Each new system introduces its own logic, behaviors, and edge cases. And without a central way to manage them, those differences accumulate.

The result isn’t scale. It’s fragmentation.

The Missing Layer in Enterprise AI: Control

What’s becoming clear is that governance alone isn’t enough—at least not in the way it’s traditionally implemented.

What enterprises need is a layer that operates alongside AI in real time. Not just defining rules, but actively enforcing them.

This is where a control layer becomes critical.

A control layer allows organizations to:

- See AI behavior across all interactions

- Apply consistent guardrails across systems

- Intervene when something goes off track

- Maintain a clear audit trail of decisions

It turns governance from a static concept into something operational.
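To illustrate, here is a minimal sketch of what such a layer might look like in code, assuming every AI system routes its draft responses through one shared checkpoint before they reach a customer. The `ControlLayer` class, the `no_refund_promises` guardrail, the fallback message, and the system names are hypothetical and exist only for illustration. A production control layer would be considerably more involved.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional

# A guardrail inspects a draft response and returns a reason string if the
# response should be blocked, or None if it is acceptable.
Guardrail = Callable[[str], Optional[str]]


@dataclass
class AuditRecord:
    timestamp: str
    system: str
    response: str
    allowed: bool
    reason: Optional[str]


@dataclass
class ControlLayer:
    guardrails: list[Guardrail]
    fallback: str = "Let me connect you with a human agent."
    audit_log: list[AuditRecord] = field(default_factory=list)

    def review(self, system: str, response: str) -> str:
        """Check a draft response from any AI system before it is sent."""
        reason = None
        for guardrail in self.guardrails:
            reason = guardrail(response)
            if reason is not None:
                break  # first violation is enough to intervene
        allowed = reason is None
        # Record every decision, allowed or not, for the audit trail.
        self.audit_log.append(AuditRecord(
            timestamp=datetime.now(timezone.utc).isoformat(),
            system=system,
            response=response,
            allowed=allowed,
            reason=reason,
        ))
        return response if allowed else self.fallback


def no_refund_promises(response: str) -> Optional[str]:
    """Hypothetical guardrail: block unapproved refund guarantees."""
    if "guaranteed refund" in response.lower():
        return "unapproved refund promise"
    return None


# The same guardrails apply no matter which system produced the response.
layer = ControlLayer(guardrails=[no_refund_promises])
print(layer.review("chatbot_a", "Your order ships tomorrow."))
print(layer.review("voice_agent_b", "You'll get a guaranteed refund today."))
```

The design choice that matters here is the single checkpoint: visibility, consistent guardrails, intervention, and the audit trail all come from routing every system through the same `review` call rather than from rules maintained separately per tool.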

From AI Governance to Operational Control

We’re already seeing a shift across enterprise AI.

From governance as documentation…to governance as execution.

From policies that describe intended behavior…to systems that ensure it.

This shift is especially important in customer-facing environments, where the cost of inconsistency is immediate and visible.

Why AI Control in Production Matters

The idea that AI is “under control” is often based on how it looks on paper—not how it behaves in practice.

That’s why the governance mirage exists.

And it tends to break at the same moment: when AI starts interacting with customers.

At that point, control is no longer theoretical. It becomes something you either have or you don’t.

And as AI continues to scale, that distinction will matter more than anything else.

Because until AI is controlled in production, scale isn’t an advantage: it’s exposure.

Common Questions About AI Governance

What is AI governance?

AI governance refers to the policies and frameworks used to define how AI systems should operate. However, governance alone does not guarantee control—especially in production environments where AI systems make real-time decisions.

Why does AI governance fail in production?

AI governance often fails in production because it relies on policies rather than real-time control. As AI systems scale across platforms and teams, organizations lose visibility into behavior and struggle to enforce consistent, deterministic guardrails.

What is the difference between AI governance and AI control?

AI governance defines intended behavior, while AI control ensures actual behavior in real time. Governance is policy-based, whereas control requires visibility, guardrails, and the ability to intervene during live interactions.

Why is customer-facing AI riskier?

Customer-facing AI introduces real-time interactions, external visibility, and compliance risk. Without control, issues such as inconsistent responses or lack of auditability become immediately visible and impact customer trust.

How can enterprises control AI systems?

Enterprises can control AI systems by implementing a centralized control layer that provides real-time visibility, enforces guardrails across systems, and enables intervention when AI behavior deviates from expectations.